The Client

This innovative and highly profitable game design workshop, with over a million players and diverse product lines, stands out as a pioneer in social gaming.

The client’s platform is a highly scalable, highly available distributed system built on the AWS global cloud. With players all over the world, they leverage the power of AWS services to deliver a seamless customer experience via a rapid development lifecycle.

The volume of traffic and the demands of analytics, compliance and reporting on a gaming platform mean that the systems generate millions of records every hour. Sitting on top of this is a sophisticated suite of financial and operational analytics.

The Challenge

Building a scalable data platform to integrate with third-party systems for insight, reporting and regulatory purposes was pivotal to the organisation’s strategy.

To build trustworthy, timely and accurate data, the client needed specific expertise to engineer robust and reliable data automation.

They turned to Mechanical Rock as a long-term trusted partner in software and data engineering to help them with this challenge.

The Solution

Mechanical Rock worked with the client’s engineering team to build data engineering capability based on industry-leading practices:

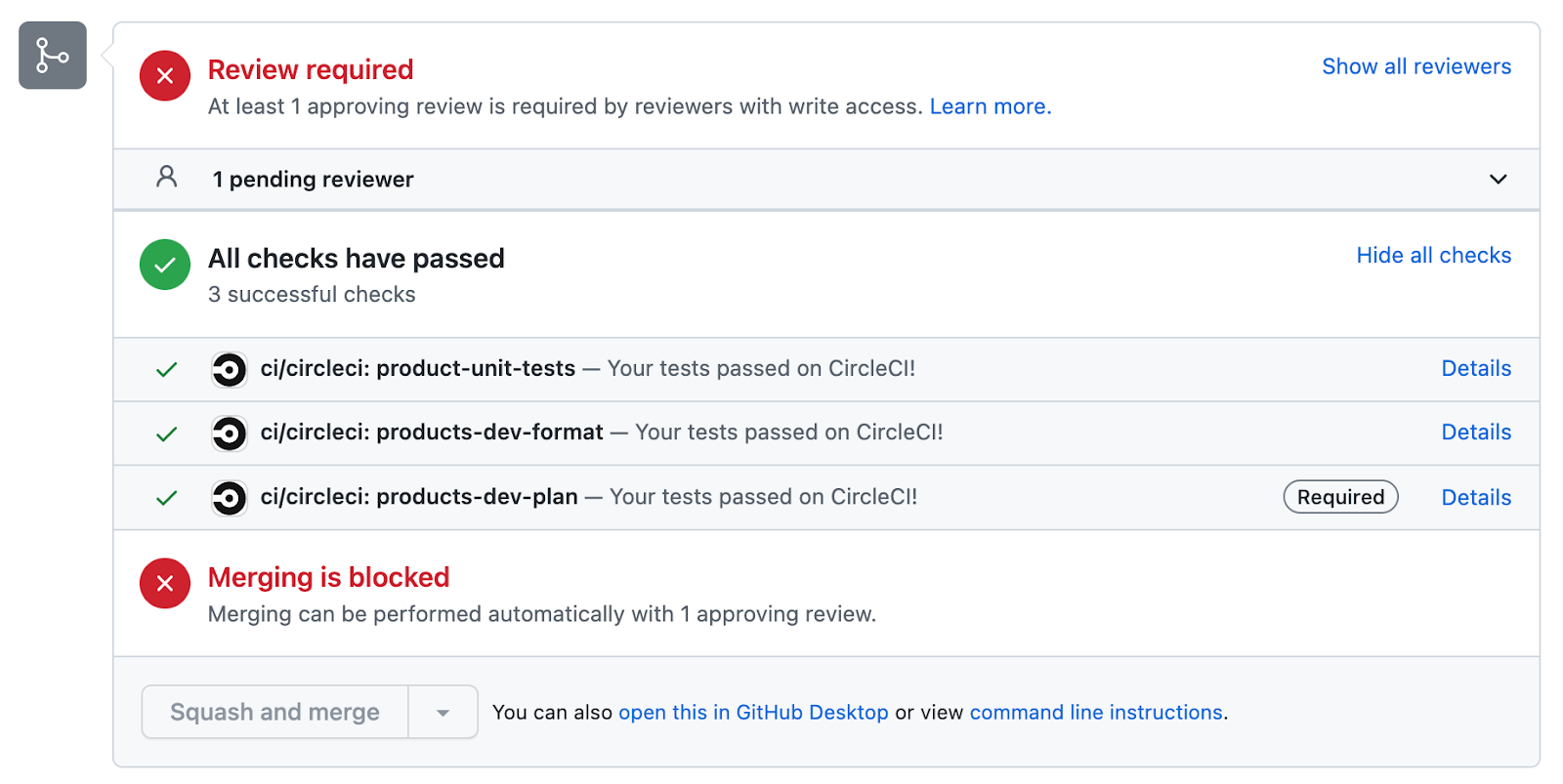

- Continuous Integration and Delivery (CI/CD) of the entire stack including building infrastructure and deployment of data pipelines

- Infrastructure-as-code deployment of all AWS and Databricks resources using Terraform providers, leading to more repeatable deployments and less configuration drift

- Trunk-based development for shorter lead times, faster feedback and more reliable change cycles

- Local development including unit tests to give data engineers confidence by allowing them to test transformation logic before committing their code

- Sandboxes to run transformations against the actual data without impacting production workloads

- Automated testing including unit tests, data quality checks and data freshness checks, ensuring the quality of the solution going forward

- Strong security including integration with a third-party identity provider, setting up a VPC and network firewalls, and a new least-privilege Role-Based Access Control (RBAC) model
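
As an illustration of the automated testing practice listed above, the following is a minimal sketch in plain Python of a unit-tested transformation alongside a data quality check and a data freshness check. It is not the client's code: the function names, the `gross`, `fees` and `loaded_at` columns, and the thresholds are all hypothetical, and a real pipeline would most likely run equivalent checks against Databricks tables rather than in-memory lists.

```python
from datetime import datetime, timedelta, timezone


def add_net_revenue(rows):
    """Hypothetical transformation: derive net revenue for each record."""
    return [{**r, "net": r["gross"] - r["fees"]} for r in rows]


def check_not_null(rows, column):
    """Data quality check: no record may have a missing value in `column`."""
    return all(r.get(column) is not None for r in rows)


def check_freshness(rows, column, max_age, now=None):
    """Data freshness check: the newest record must be no older than `max_age`."""
    if not rows:
        return False  # an empty dataset is treated as stale
    now = now or datetime.now(timezone.utc)
    newest = max(r[column] for r in rows)
    return now - newest <= max_age


def test_add_net_revenue():
    """Unit test that a data engineer could run locally before committing."""
    rows = [{"gross": 100, "fees": 15}]
    assert add_net_revenue(rows) == [{"gross": 100, "fees": 15, "net": 85}]
```

Keeping the transformation as a pure function is what makes it testable on a laptop, while the two checks can run on every change as part of the CI/CD pipeline.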

By expanding the client's Databricks ecosystem, we optimised data analytics to unlock scalable insights. This streamlining of workflows accelerated analysis, thereby enhancing efficiency and agility.

The Benefits

- Developed 3 new data pipelines with 43 ETL jobs in just 2 weeks

- Averaged 4 production releases per day

- Automated testing on every change to the system

- Improved data quality through automated checks

- Improved reliability through pipeline alerting and monitoring

- Faster feedback loops for data engineers

- Empowered the engineering team to move faster and fail faster by testing changes against actual data without impacting production